FAQ

These are the AI models that give humanoid robots their intelligence. Also known as Vision-Language-Action (VLA) models, they enable robots to understand and respond to human actions and language while pursuing the goals they are tasked with. The missing ingredient is training data: vast quantities of real-world human activity data, analogous to the text data that enabled the Large Language Model (LLM) breakthroughs now reshaping knowledge work.

TRACE mining partners gather data from real-world task interactions during their regular daily activities. This data is then verified, labeled, and processed to create high-value training data for robotic AI servitors.

The

best way to get involved now is to

become

a mining partner and collect training data

during your normal everyday activities. No

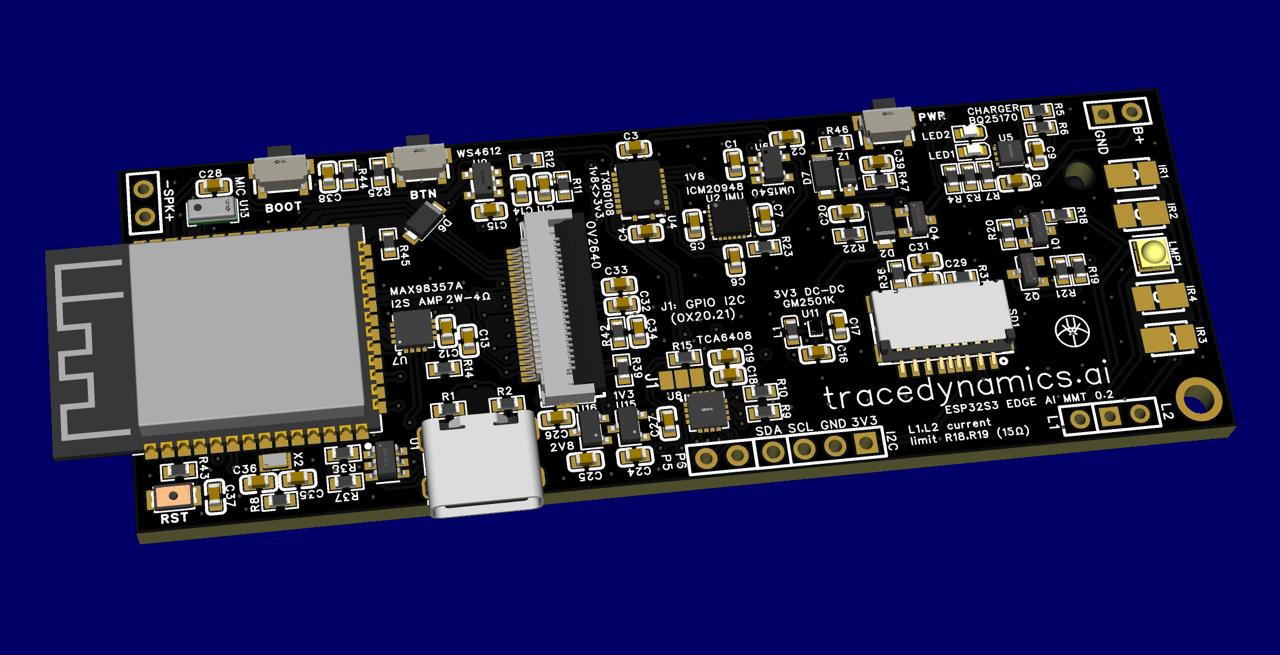

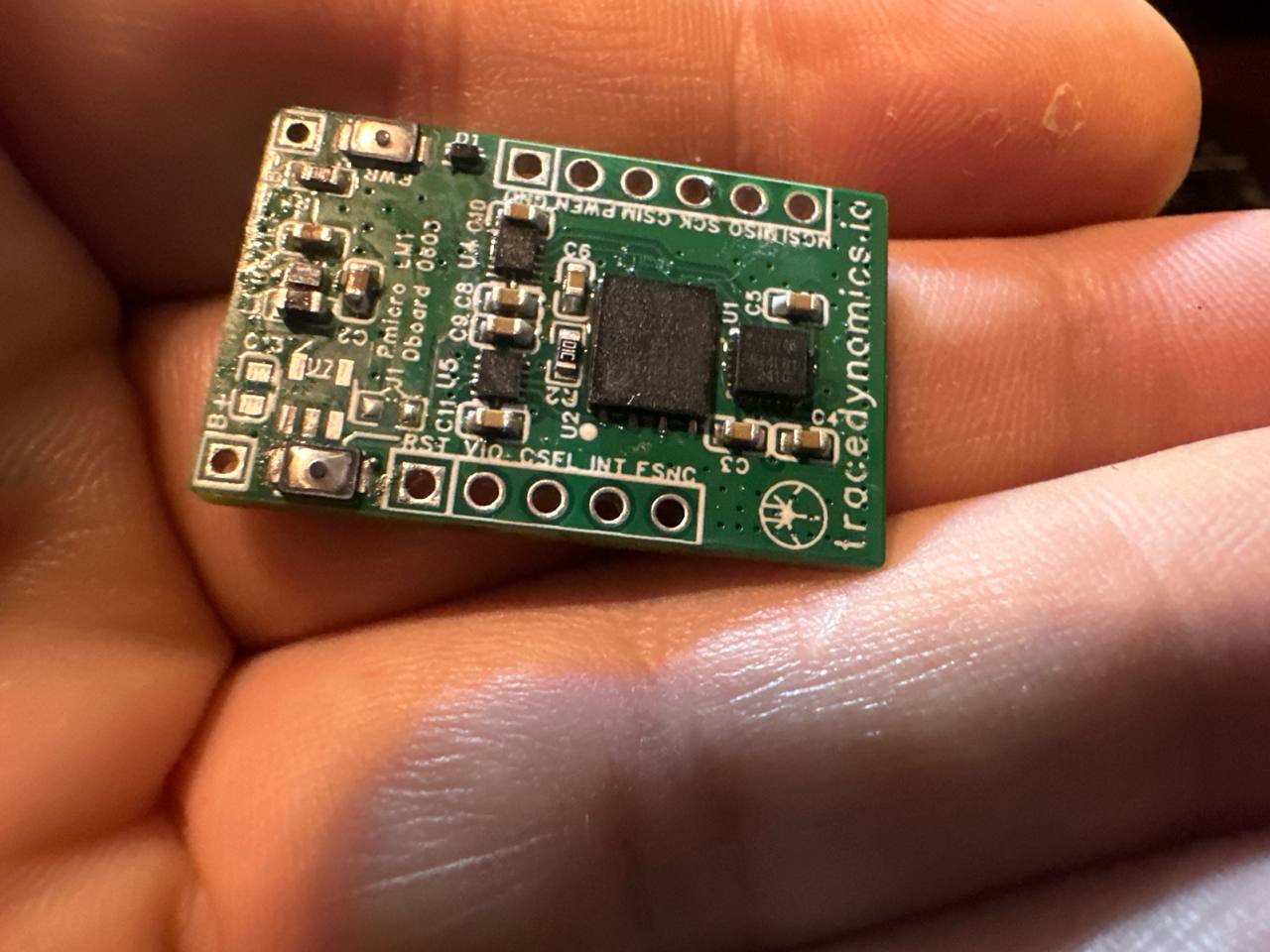

special skills or extra work required. TRACE

wearable sensors collect data automatically

during your regular activities and tasks.

Join our community channels to stay current

and position yourself for early access.

Early adopters earn at up to 64x the standard

rate, so early involvement matters.

By becoming a mining partner, you can contribute to the data WELL and own an ongoing share of commercial licensing revenues from the TRACE training dataset.

Based on current market projections, each data-hour of quality training data could result in an estimated annual dividend of $23 to $170*. In the early stages of data collection, early adopters will be compensated at up to 64x the regular rate, so each hour collected can earn the revenue share of up to 64 hours.

As an example, a person collecting 100 hours of training data could earn an annual dividend of $2,300* to $17,000*, depending on the adoption and success of general purpose robotics. For an early contributor, earnings could be 32x higher or more, up to 64x for the first public contributors, potentially resulting in annual returns of up to $1,472 to $10,880* per hour spent collecting training data.
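The arithmetic behind these figures can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function and constant names are hypothetical, the per-hour range is the speculative $23 to $170 projection quoted above, and the early-adopter multiplier is assumed to scale credited hours linearly.

```python
# Illustrative sketch only. All names here are hypothetical, and the
# dollar figures are the speculative projections quoted in this FAQ.
LOW_PER_HOUR = 23          # projected annual dividend per data-hour, low end (USD)
HIGH_PER_HOUR = 170        # projected annual dividend per data-hour, high end (USD)
MAX_EARLY_MULTIPLIER = 64  # highest early-adopter multiplier mentioned above


def annual_dividend_range(hours, multiplier=1):
    """Return the (low, high) projected annual dividend in USD.

    Assumes the multiplier simply scales the number of credited hours.
    """
    credited_hours = hours * multiplier
    return credited_hours * LOW_PER_HOUR, credited_hours * HIGH_PER_HOUR


# 100 hours at the standard rate
print(annual_dividend_range(100))                     # (2300, 17000)

# 1 hour at the maximum early-adopter multiplier
print(annual_dividend_range(1, MAX_EARLY_MULTIPLIER))  # (1472, 10880)
```

The two printed ranges match the 100-hour example and the per-hour early-contributor figures given above.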

*All figures are speculative and depend upon successful licensing of the WELL dataset to commercial users.

A market brief explaining the basis of our segment projections is available for review in the market projections.